Mamba: Bringing Multi-Dimensional ABR to WebRTC

- Yueheng Li Nanjing University

- Zicheng Zhang Nanjing University

- Hao Chen Nanjing University

- Zhan Ma Nanjing University

The key advantage of Mamba over default WebRTC.

Abstract

Contemporary real-time video communication systems, such as WebRTC, use an adaptive bitrate (ABR) algorithm to assure high-quality and low-delay services, e.g., by promptly adjusting the video bitrate according to the instantaneous network bandwidth. However, target bitrate decisions in the network and bitrate control in the codec are typically uncoordinated, and ignoring the effect of inappropriate resolution and frame rate settings further compromises bitrate control. Both issues severely degrade the quality of experience (QoE). To tackle these challenges, we propose Mamba, an end-to-end multi-dimensional ABR algorithm that uses multi-agent reinforcement learning (MARL) to maximize the user's QoE in a video conferencing system. Mamba adaptively and collaboratively adjusts encoding factors, including the quantization parameter (QP), resolution, and frame rate, based on observed states such as network conditions and video complexity information. We also introduce curriculum learning to improve the training efficiency of MARL. Both in-lab and real-world evaluation results demonstrate the efficacy of Mamba.

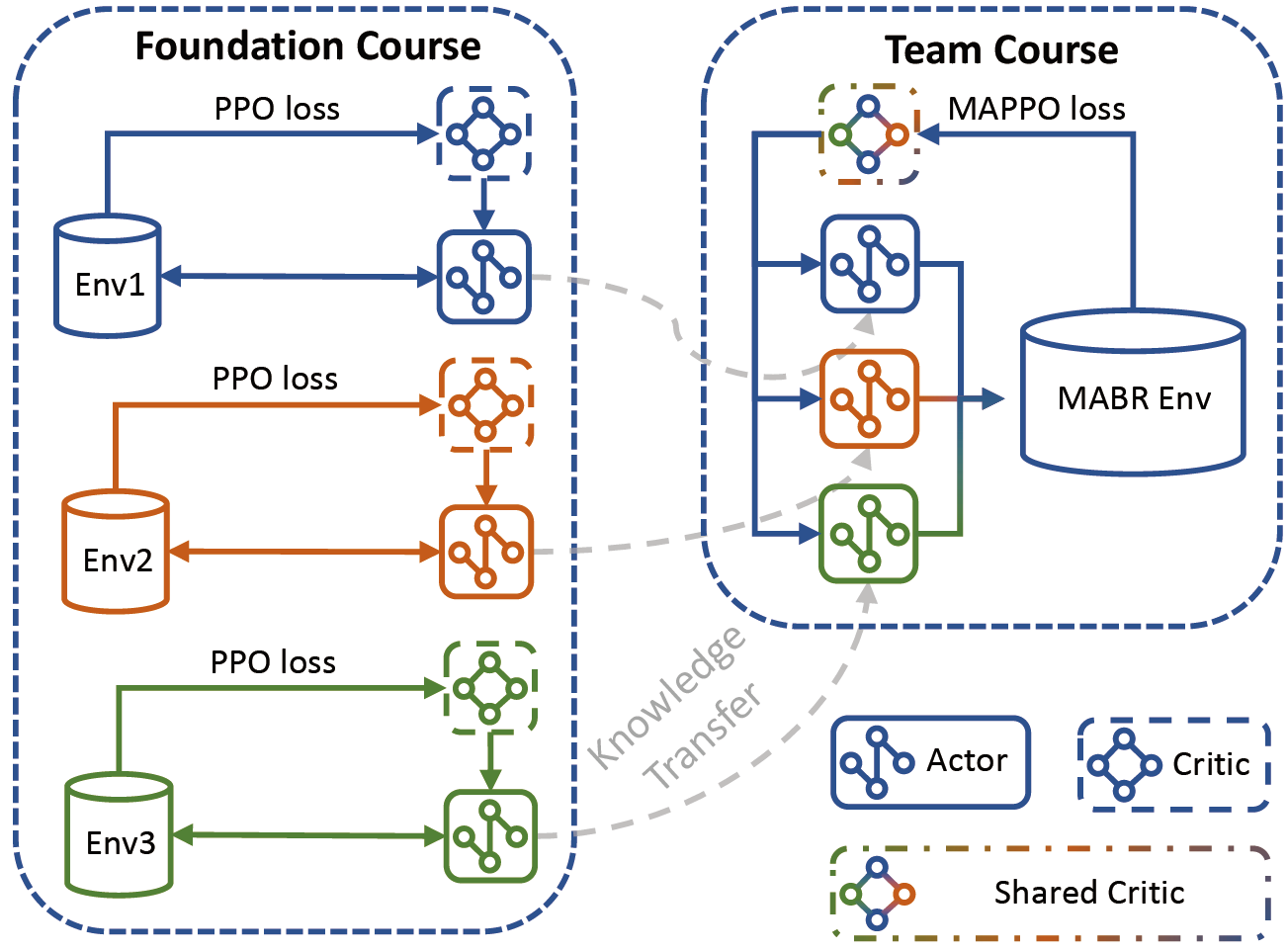

Training

To support joint adaptation of the QP, resolution, and frame rate, Mamba uses MARL to learn an end-to-end multi-dimensional ABR policy. The most intuitive approach is to train Mamba's policy directly from scratch using an existing MARL algorithm. However, this can be very inefficient and require unacceptable training time because of the inherent non-stationarity of multi-agent tasks: each agent's environment keeps changing as the other agents update their policies. As a result, training from scratch can easily prevent an agent from finding the optimal policy, since it cannot yet cooperate well with the other agents. To address this issue, we introduce curriculum learning into the training of Mamba, which has been shown to help in multi-agent training. As shown, we design a two-stage curriculum for MABR learning: a foundation course and a team course.
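The two-stage idea can be illustrated with a small scheduling sketch. This page does not describe what happens inside each course, so the code below makes a loud assumption: in the foundation course each agent is trained alone while the others are held fixed, and in the team course all agents are trained together. The names `Phase`, `build_curriculum`, and `schedule` are hypothetical and are not taken from Mamba's implementation.

```python
from dataclasses import dataclass
from typing import Iterator, List, Tuple

# One agent per encoding factor named in the text.
AGENTS: Tuple[str, ...] = ("qp", "resolution", "frame_rate")


@dataclass(frozen=True)
class Phase:
    stage: str                  # "foundation" or "team"
    trainable: Tuple[str, ...]  # agents whose policies are updated in this phase
    episodes: int               # number of training episodes in this phase


def build_curriculum(foundation_episodes: int, team_episodes: int) -> List[Phase]:
    """Build an assumed two-stage curriculum.

    Assumption (not from the source): the foundation course trains each
    agent individually, and the team course trains all agents jointly.
    """
    phases = [Phase("foundation", (name,), foundation_episodes) for name in AGENTS]
    phases.append(Phase("team", AGENTS, team_episodes))
    return phases


def schedule(phases: List[Phase]) -> Iterator[Tuple[str, Tuple[str, ...]]]:
    """Yield (stage, trainable agents) once per training episode."""
    for phase in phases:
        for _ in range(phase.episodes):
            yield phase.stage, phase.trainable


if __name__ == "__main__":
    for stage, trainable in schedule(build_curriculum(2, 3)):
        print(stage, trainable)
```

A real training loop would replace the `print` call with an episode rollout and a policy update restricted to the agents listed in `trainable`.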

System Implementation

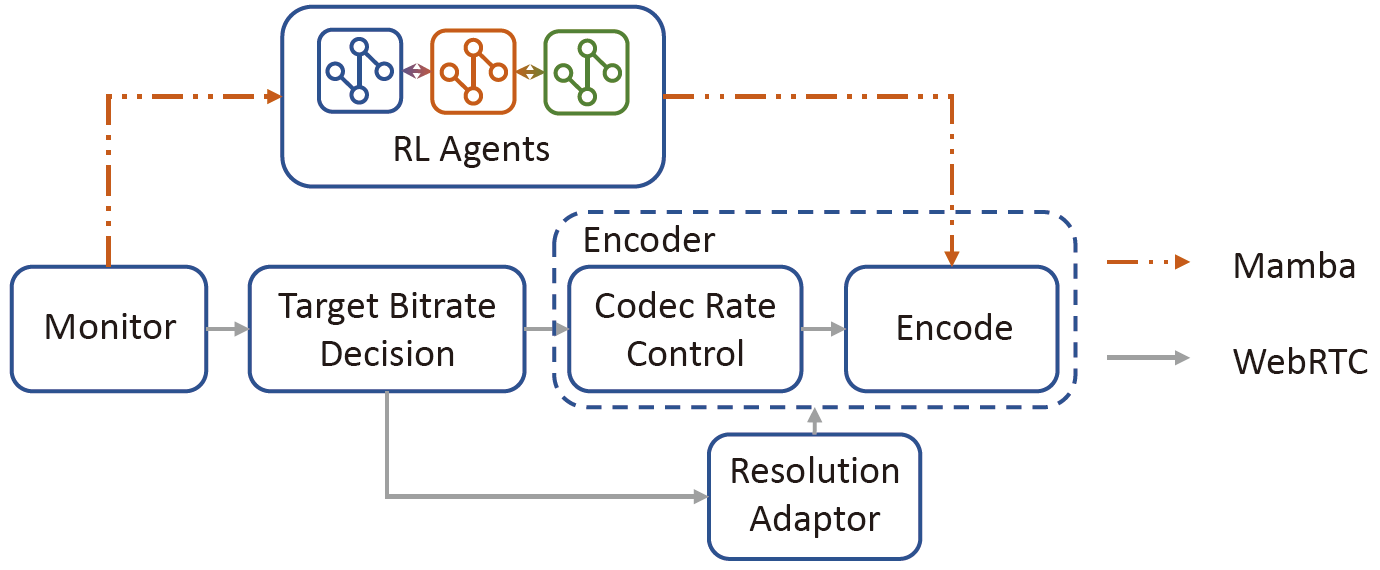

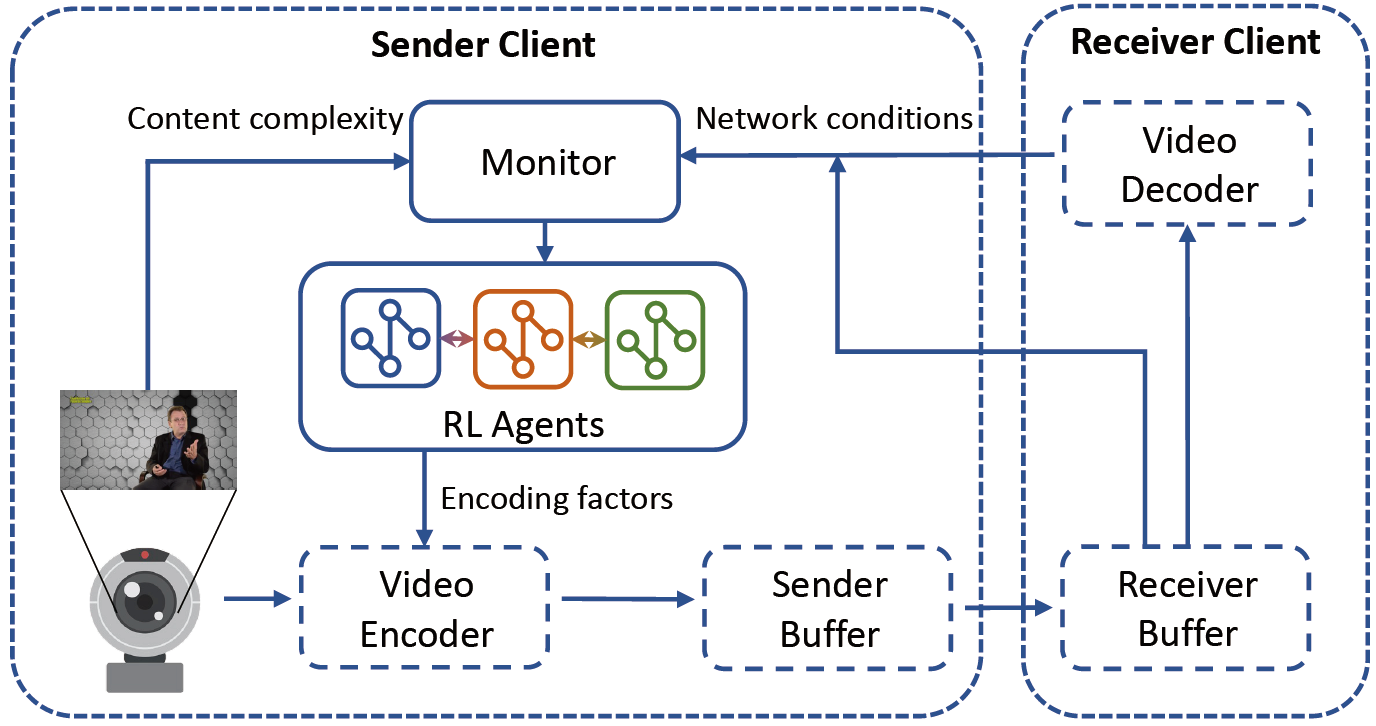

We implemented Mamba as an independent module integrated into a WebRTC-compatible testbed, with the aim of replacing the original congestion control and bitrate control modules at the system level. All experiments for observation and evaluation were conducted on this testbed. The figure above shows how Mamba integrates into the existing WebRTC system. The modules marked by solid lines in the figure are added by Mamba and consist primarily of the Mamba agents and a monitor that gathers state observations for them. The remaining modules, indicated by dashed lines, are inherited from the original WebRTC system. The monitor collects observations of content complexity and network conditions, which are then fed into Mamba's RL agents to decide the encoding factors for the video encoder.
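The data flow from monitor to agents to encoder can be sketched as follows. This is only an illustration of the interface shape under stated assumptions: the field names in `Observation`, the `EncoderSettings` type, and the threshold-based toy policies are all hypothetical stand-ins, and the trained RL agents in Mamba would take the place of the toy policies.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Observation:
    """State gathered by the monitor (field names are assumptions)."""
    throughput_kbps: float     # network condition
    rtt_ms: float              # network condition
    loss_rate: float           # network condition
    content_complexity: float  # video complexity, assumed scalar


@dataclass(frozen=True)
class EncoderSettings:
    """Encoding factors handed to the video encoder."""
    qp: int
    height: int
    fps: int


Policy = Callable[[Observation], int]


def decide(obs: Observation, policies: Dict[str, Policy]) -> EncoderSettings:
    """Query one policy per encoding factor and bundle the decisions."""
    return EncoderSettings(
        qp=policies["qp"](obs),
        height=policies["resolution"](obs),
        fps=policies["frame_rate"](obs),
    )


# Toy placeholder policies; NOT Mamba's learned agents.
def toy_qp_policy(obs: Observation) -> int:
    return 28 if obs.throughput_kbps >= 1000 else 40


def toy_resolution_policy(obs: Observation) -> int:
    return 720 if obs.throughput_kbps >= 1000 else 360


def toy_frame_rate_policy(obs: Observation) -> int:
    return 30 if obs.rtt_ms < 100 else 15


TOY_POLICIES: Dict[str, Policy] = {
    "qp": toy_qp_policy,
    "resolution": toy_resolution_policy,
    "frame_rate": toy_frame_rate_policy,
}

if __name__ == "__main__":
    congested = Observation(throughput_kbps=500, rtt_ms=150,
                            loss_rate=0.02, content_complexity=0.7)
    print(decide(congested, TOY_POLICIES))
```

Keeping one policy per encoding factor mirrors the multi-agent structure described above, while the single `decide` call reflects that the encoder receives all three factors together.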

Experiments

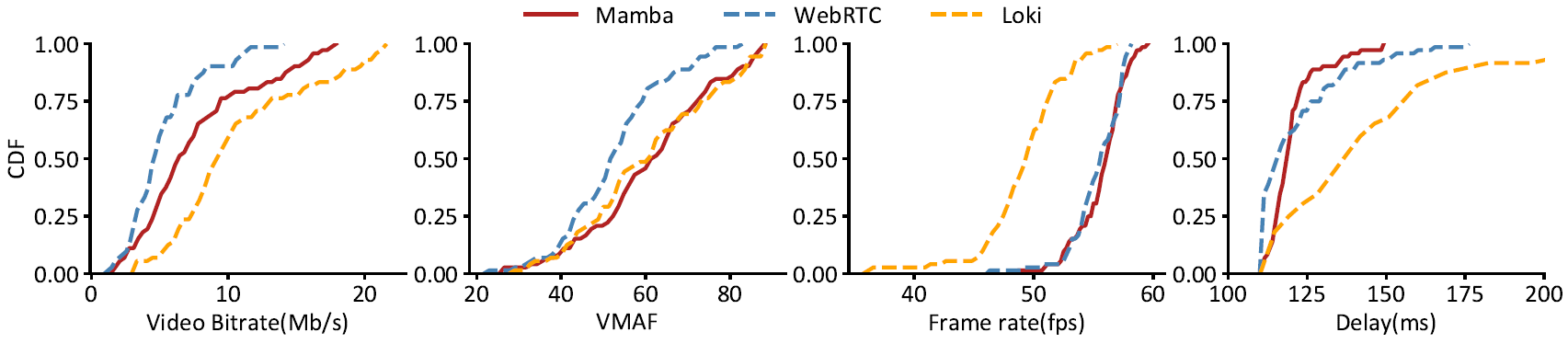

Comparison of Mamba with state-of-the-art WebRTC and Loki, shown as cumulative distribution functions (CDFs). Video bitrate, picture quality (in VMAF), playback frame rate, and delay are used as evaluation metrics. Results were collected on the Orca, NYU_METS, Belgium-4G, and US_5G network bandwidth trace datasets.
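For readers who want to reproduce this style of plot from their own measurements, an empirical CDF can be computed as in the minimal sketch below. The function names and the sample values are illustrative only and are not data from the evaluation.

```python
from bisect import bisect_right
from typing import List, Sequence, Tuple


def empirical_cdf(samples: Sequence[float]) -> List[Tuple[float, float]]:
    """Return (value, cumulative fraction) points for a step-style CDF plot."""
    if not samples:
        raise ValueError("samples must be non-empty")
    xs = sorted(samples)
    n = len(xs)
    return [(x, (i + 1) / n) for i, x in enumerate(xs)]


def fraction_at_or_below(samples: Sequence[float], threshold: float) -> float:
    """Fraction of samples whose value is <= threshold."""
    if not samples:
        raise ValueError("samples must be non-empty")
    xs = sorted(samples)
    return bisect_right(xs, threshold) / len(xs)


if __name__ == "__main__":
    # Made-up per-frame delays in milliseconds, for illustration only.
    delays_ms = [100.0, 200.0, 300.0, 400.0]
    print(empirical_cdf(delays_ms))
    print(fraction_at_or_below(delays_ms, 250.0))
```

For delay, a curve further to the left is better. For bitrate, VMAF, and frame rate, a curve further to the right is better.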

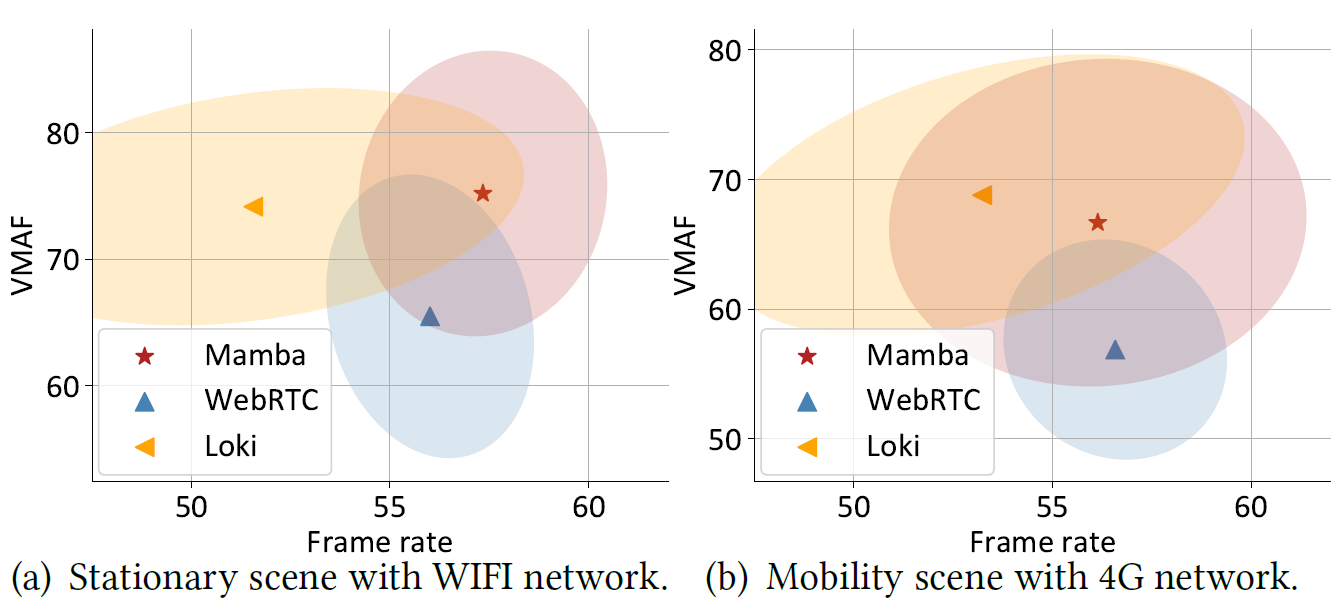

Real-world evaluation results of Mamba, WebRTC, and Loki in both stationary and moving scenarios.