A Dual Camera System for High Spatiotemporal Resolution Video Acquisition

Vision Lab, Nanjing University

- Ming Cheng

- Zhan Ma

- M. Salman Asif

- Yiling Xu

- Haojie Liu

- Wenbo Bao

- Jun Sun

Abstract

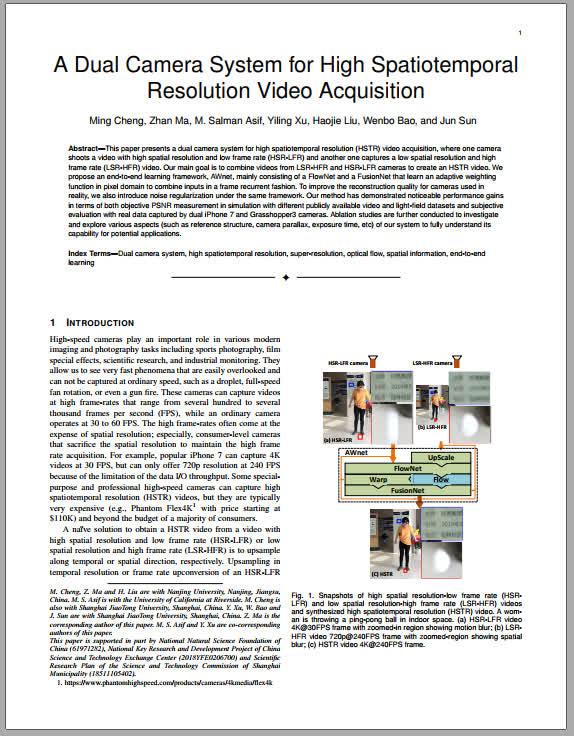

This paper presents a dual camera system for high spatiotemporal resolution (HSTR) video acquisition, in which one camera shoots a video with high spatial resolution and low frame rate (HSR-LFR) and the other captures a video with low spatial resolution and high frame rate (LSR-HFR). Our main goal is to combine the videos from the LSR-HFR and HSR-LFR cameras into a single HSTR video. We propose an end-to-end learning framework, AWnet, consisting mainly of a FlowNet and a FusionNet that learn an adaptive weighting function in the pixel domain to combine the inputs in a frame-recurrent fashion. To improve reconstruction quality for real-world cameras, we also introduce noise regularization within the same framework. Our method demonstrates noticeable performance gains, both in objective PSNR measured in simulations on several publicly available video and light-field datasets and in subjective evaluations of real data captured by dual iPhone 7 and Grasshopper3 cameras. We further conduct ablation studies on various aspects of the system (such as reference structure, camera parallax, and exposure time) to fully understand its capabilities for potential applications.
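The abstract describes AWnet only at a high level. As a rough, non-authoritative illustration of "adaptive weighting in the pixel domain" applied frame-recurrently, the sketch below fuses a warped HSR reference with an upsampled LSR frame. Everything here is an assumption rather than the paper's implementation: all function names are hypothetical, FlowNet and FusionNet are replaced by caller-supplied `flow_fn` and `weight_fn` callables, nearest-neighbour upsampling and warping stand in for learned or bilinear operations, the convex form `w * HSR + (1 - w) * LSR` is our reading of "adaptive weighting", HSR keyframes are passed through unchanged, and noise regularization is not modeled.

```python
import math
from typing import Callable, Dict, List, Tuple

Image = List[List[float]]  # grayscale frame, row-major
FlowFn = Callable[[Image, Image], Tuple[Image, Image]]  # hypothetical stand-in for FlowNet
WeightFn = Callable[[Image, Image], Image]  # hypothetical stand-in for FusionNet


def upsample_nearest(lr: Image, factor: int) -> Image:
    """Nearest-neighbour upsampling of an LSR frame by an integer factor."""
    return [[lr[y // factor][x // factor] for x in range(len(lr[0]) * factor)]
            for y in range(len(lr) * factor)]


def warp_backward(ref: Image, flow_x: Image, flow_y: Image) -> Image:
    """Backward-warp `ref`: sample it at (x + fx, y + fy), rounded to the
    nearest pixel and clamped to the image borders."""
    h, w = len(ref), len(ref[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            sx = min(max(math.floor(x + flow_x[y][x] + 0.5), 0), w - 1)
            sy = min(max(math.floor(y + flow_y[y][x] + 0.5), 0), h - 1)
            row.append(ref[sy][sx])
        out.append(row)
    return out


def adaptive_fuse(warped_hsr: Image, up_lsr: Image, weight: Image) -> Image:
    """Per-pixel convex combination: w * warped HSR + (1 - w) * upsampled LSR."""
    return [[wt * a + (1.0 - wt) * b for a, b, wt in zip(ra, rb, rw)]
            for ra, rb, rw in zip(warped_hsr, up_lsr, weight)]


def reconstruct(lsr_frames: List[Image], hsr_keyframes: Dict[int, Image],
                factor: int, flow_fn: FlowFn, weight_fn: WeightFn) -> List[Image]:
    """Frame-recurrent loop (assumed structure): an HSR keyframe resets the
    reference; otherwise the previous fused output becomes the reference."""
    ref = None
    outputs: List[Image] = []
    for t, lr in enumerate(lsr_frames):
        if t in hsr_keyframes:
            ref = hsr_keyframes[t]  # assumption: keyframes are output as-is
            outputs.append(ref)
            continue
        if ref is None:
            raise ValueError("the first frame needs an HSR keyframe")
        up = upsample_nearest(lr, factor)
        fx, fy = flow_fn(up, ref)
        warped = warp_backward(ref, fx, fy)
        fused = adaptive_fuse(warped, up, weight_fn(warped, up))
        outputs.append(fused)
        ref = fused  # recurrence: reuse the latest output as the next reference
    return outputs
```

Because each non-key frame is warped from the previous output rather than from the original HSR frame, any fusion error can propagate until the next keyframe; under this sketch's assumptions, that is one plausible reason the reference structure is worth an ablation study.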

BibTeX

    @article{cheng2019dual,
      title={A Dual Camera System for High Spatiotemporal Resolution Video Acquisition},
      author={Cheng, Ming and Ma, Zhan and Asif, M Salman and Xu, Yiling and Liu, Haojie and Bao, Wenbo and Sun, Jun},
      journal={arXiv preprint arXiv:1909.13051},
      year={2019},
    }